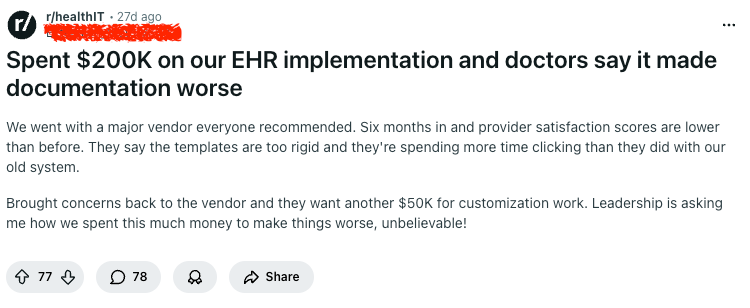

A healthcare operator posted on Reddit that they spent $200K on an EHR implementation, only to end up with lower provider satisfaction, more clicking, and worse documentation. Six months after go-live, the vendor came back with the predictable next step: another $50K for customization.

The comments were almost more interesting than the post.

Some people said this is normal. Others said $200K is actually cheap. A few asked the most important question in the entire thread: were physician leaders involved in the decision and build process at all?

This is usually not a story about one bad vendor. It is about how healthcare organizations buy and implement software.

Where implementations go wrong

The problem is rarely the software alone

Most failed rollouts get framed as a software problem, especially by leadership after go-live.

The smartest thread in the comments pushed back on that framing. Multiple people pointed out that rigid templates, too many clicks, and poor documentation flow usually come from implementation choices, not just the underlying product. Default builds are made for demos and generic requirements. Clinicians work in live environments full of interruptions, shortcuts, and edge cases.

That’s where documentation starts to break down.

The sales process and the real product are different

One commenter said it best: the sales process doesn’t represent the implementation process. That is exactly what happens.

During evaluation, the organization sees polished demos, curated templates, and smooth workflows. The software looks structured, complete, and easy to use. By the time the contract is signed, most teams think they have bought a working system. What they have actually bought is a starting point.

The real work begins after that. Templates need to be rebuilt. Specialty workflows need to be mapped. Analysts need to understand what happens in a live encounter, not what a requirements document says should happen. Providers need to test real documentation flows, not admire mock screens in a vendor call.

The problem is that many teams discover this only after go-live, when changing anything becomes slow, political, and expensive.

Documentation gets worse when systems are designed around data capture instead of clinical flow

Most EHR implementations are justified using language like standardization, visibility, data quality, compliance, and reporting. All of those things matter. But none of them reflect how clinicians actually work.

Clinicians care about momentum.

They want to finish a note while the encounter is still fresh. They want the documentation path to follow how they think, not how a committee organized a template. They want the system to get out of the way. That is not what most implementations optimize for.

They optimize for structured fields, complete capture, billing support, downstream reporting, and auditability. But when those goals become the dominant design principle, documentation turns into navigation. The provider stops writing and starts hunting through the interface.

You see this in small ways: a progress note that takes three extra clicks, a template that doesn’t match the specialty, a dropdown that forces the wrong structure.

Were physician leaders part of the decision process?

Organizations say providers were involved because a few people attended meetings, reviewed a build, or saw a demo. That is not the same thing as provider-led workflow design. Real involvement means the people documenting every day shape the structure, test the flows, reject what slows them down, and influence the final build before go-live.

Without that, the implementation becomes an exercise in translating clinical work through layers of analysts, project managers, and vendor assumptions. By the time it reaches the screen, it may be “approved.” It is rarely natural.

The real failure is treating go-live as the end of the project

Many teams still think of implementation as a project with a finish line. Sign the contract. Configure the system. Train the users. Go live. Stabilize. Done.

That mental model is wrong. Go-live is when the real evaluation starts.

Before go-live, you have assumptions. After go-live, you have evidence. You can see where providers slow down, where templates do not match the specialty, where analysts misunderstood the actual sequence of work, where the system creates unnecessary clicks, and where training is hiding a workflow flaw.

Before paying another $50K, leadership should be asking a much more basic question: what is actually happening in the room, on the screen, during documentation?

Why the customization quote shows up so often

The extra $50K for customization is not surprising. It is almost part of the business model.

The first implementation gets the customer live. The second round makes it tolerable. Sometimes the third round makes it usable.

By the time the customer is frustrated enough to push back, the vendor has leverage. Switching costs are high. Leadership is already committed. Providers are already trained. The political cost of admitting the first rollout was flawed is high. So the easiest decision is to authorize more spend and hope optimization fixes the problem.

Sometimes it does help. However, if the original design was built without a real understanding of daily documentation, the organization should be very careful before paying to extend that design.

Final thoughts

The most interesting part of the Reddit post was not that a team spent $200K and got a worse documentation experience. It was how normal everyone found it.

That alone should be a red flag.

When an entire comments section responds to a failed rollout with “this is normal,” the problem is bigger than one implementation. The industry has normalized spending heavily on systems that create more friction for the people using them most.

The fix is a different implementation philosophy: one that starts with real clinician workflows, treats go-live as the beginning rather than the end, and stops assuming that structured software automatically creates better documentation.

Because if documentation gets slower, more rigid, and more painful after implementation, it does not matter how complete the feature set looked in the demo. The system failed where it counts.
